One of our missions is to demonstrate that success on the web does not require having the largest budget. Instead, it requires slowing down, being thoughtful, and working with people who know how to get results out of the web.

Too many think tanks seem to be working with designers who care more about making things pretty, or developers who care about making things technically efficient, rather than working with usability experts who care about making a website into a tool that a human being can actually use.

This is why even groups with incredible, gargantuan, aircraft-carrier-group-sized budgets have internal search that is an absolute embarrassment.

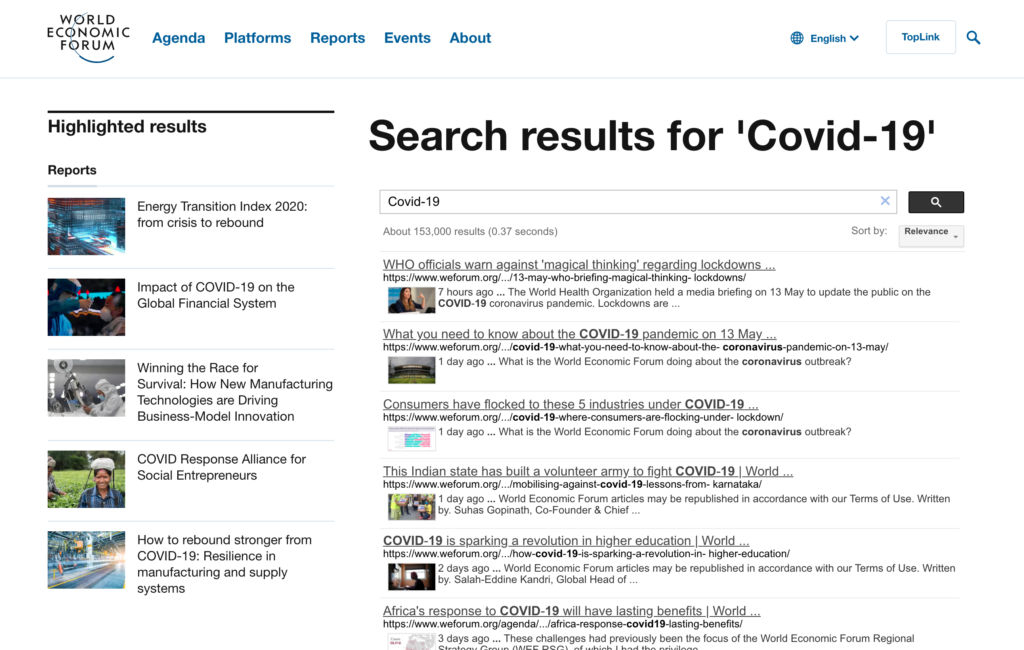

Let’s start with the hugest of the huge. The World Economic Forum’s most recent public disclosure places their annual expenditures at nearly $500 million. Yet this is how their site search looks:

What’s wrong with these search results?

- No autocomplete. There are no suggestions like “Covid-19 model” or “Covid-19 WHO,” and no correction of misspellings, which are common, especially for words like “hydroxychloroquine.”

- Results cannot be filtered. There’s no way to see only items published in the last week, or only event videos, or only reports. There’s no way to single out a particular author’s work. Filters would be handy considering my search for “Covid-19” produced 153,000 results. That list needs winnowing.

- Sorting is broken. The only sorting options offered are “Relevance” and “Date” and half the time selecting “Date” resulted in an API error.
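To make the first gap concrete, here is a minimal sketch of autocomplete and misspelling correction. It assumes the site keeps a list of known search terms (the `KNOWN_TERMS` list, `suggest`, and `correct` are hypothetical names used only for illustration) and relies on Python's standard `difflib` module. A real search product would do this differently, but the idea is the same.

```python
import difflib
from typing import List, Optional

# Hypothetical list of terms, e.g. drawn from past queries or content tags.
KNOWN_TERMS = [
    "covid-19 model",
    "covid-19 who",
    "covid-19 vaccine",
    "hydroxychloroquine",
    "climate policy",
]


def suggest(prefix: str, limit: int = 5) -> List[str]:
    """Return known terms that start with what the user has typed so far."""
    needle = prefix.strip().lower()
    if not needle:
        return []
    return [term for term in KNOWN_TERMS if term.startswith(needle)][:limit]


def correct(query: str) -> Optional[str]:
    """Return the closest known term for a likely misspelling, or None."""
    matches = difflib.get_close_matches(
        query.strip().lower(), KNOWN_TERMS, n=1, cutoff=0.8
    )
    return matches[0] if matches else None
```

Typing “Covid-19” would surface the three Covid-19 terms as suggestions, and a query like “hydroxycloroquine” would be matched to the correctly spelled term.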

The problem here is not that the World Economic Forum can’t afford a decent search experience; it’s that they don’t care to provide one. The approach seems to be: throw up a quick and dirty implementation of Google site search and let users struggle.

Users Want Well-Implemented Search

Some of this is self-fulfilling prophecy. We hear this all too often when talking to our think tank clients:

Users don’t really use our search, so we don’t invest in it.

We understand this thinking. Think tanks have to invest their dollars strategically, but this analysis gets the causality backwards.

If you don’t invest in search, it will work poorly, so users won’t use it.

I know the causality works this way because it’s borne out by the research. When sites have simple, visible search functionality, users buy more products or, in the case of think tanks, download more research. A Baymard Institute study of e-commerce search found that sites with better search delivered better results to users (as in they closed more sales), and the study made this important observation:

As the poor overall state of search is present within all industries, most sites will have an opportunity to create a true competitive advantage by offering a vastly superior search experience compared to that of their competitors.

This is 100% true when it comes to think tanks. To prove this, let’s look at some well-known groups and how they perform in search.

Think Tanks with Search Problems

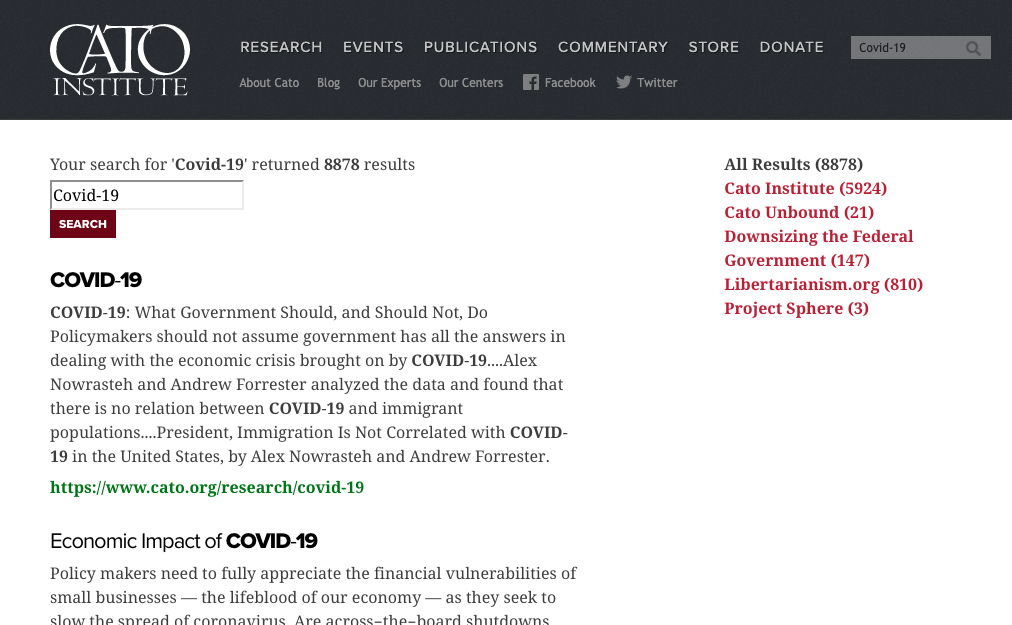

Let’s first look at the problem children. Cato, Hudson, and Heritage are all highly respected groups with great research that, were it findable, would serve to make the world a better place. Yet each is failing at search in pretty significant ways:

The Cato Institute

Cato offers no filtering by relevant topics, no filtering by author, no filtering by date, and only shows users 10 results at a time. Content categories like “Cato Unbound” or “Downsizing the Federal Government” don’t matter to most journalists or policymakers. Internally relevant content distinctions should be replaced by commonly recognized policy topics.

The Hudson Institute

The Hudson Institute offers three results at a time, no filtering, and no sorting options.

The Heritage Foundation

A journalist might be looking for information on how Covid-19 affects defense policy, public schools, or state unemployment programs, but The Heritage Foundation offers no filters for the nearly 50 policy areas they cover. Instead, users are given filters of report formats that they probably don’t understand. Does the average journalist or Hill staffer know the difference between “Heritage Impact,” “Heritage Explains,” and “Report”? Not likely. If formats like these are offered, they need to be shown with explanations. On desktop, this should be done with tooltips that show up when users hover over a format.

Think Tanks with Great Search

Now let’s look at two examples of think tanks that get search right:

The Reason Foundation

What is Reason doing right in this example?

- Showing the number of results. This not only shows users that you have a lot of content to begin with, it helps them understand if they should keep filtering. In this case, I got down to 3 results after filtering by date and topic, leading me directly to the content that’s most relevant.

- Offering all content attributes as filters. If you associate a piece of content with a topic, author, or publication type, make those search filters. Notice how Reason puts Publication Types last on their list of filters, recognizing that format usually doesn’t matter as much to search users.

- Using “load more” instead of pagination. Before I filtered down my list, Reason showed the number of results I had and offered an option to “Load More” at the bottom of the list. This is better than pagination as it invites users to commit only to expanding the current page, rather than beginning a journey into deeper and deeper pages. This may seem like a distinction without a difference, but loading another page is more of a commitment in the minds of users who are looking to get results fast, rather than get lost down blind alleys.

- Filters work as checkboxes. This allows users to check multiple filters and easily turn filters on and off. Offering the “Clear” function is also key here, as it allows users to restart the filtering process without scrolling up and down the list of filters.

- Attributes are distinct. I can easily scan by title, date, or author, the attributes that most users care about. It’s crucial to make all attributes visually distinct through differences in font size, color, typeface, etc.
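The checkbox behavior described above can be sketched in a few lines. This is not Reason's actual implementation, only an illustration under stated assumptions: the `Item` and `FilterState` classes and their field names are hypothetical, checked values within one filter group are treated as alternatives (any may match), and separate filter groups must all match.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set


@dataclass
class Item:
    # Hypothetical content attributes; each one doubles as a search filter.
    title: str
    topic: str
    author: str
    pub_type: str


@dataclass
class FilterState:
    # Maps a filter group ("topic", "author", "pub_type") to its checked values.
    checked: Dict[str, Set[str]] = field(default_factory=dict)

    def toggle(self, group: str, value: str) -> None:
        """Check a box if it is unchecked, or uncheck it if it is checked."""
        values = self.checked.setdefault(group, set())
        if value in values:
            values.remove(value)
            if not values:
                del self.checked[group]
        else:
            values.add(value)

    def clear(self) -> None:
        """The 'Clear' function: restart filtering in one step."""
        self.checked.clear()

    def apply(self, items: List[Item]) -> List[Item]:
        """Keep items that match a checked value in every active group."""
        return [
            item
            for item in items
            if all(
                getattr(item, group) in values
                for group, values in self.checked.items()
            )
        ]
```

The length of the list returned by `apply` is the result count to show the user, which ties the checkboxes back to the first point in the list.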

Reason could improve their page by loading more results and also making filtering persistent, so that once a user clicks an individual result and then clicks the back button, the same list is presented.
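One common way to make filtering persistent is to store the selected filters in the page URL, so the back button restores the same list. The sketch below shows the idea with Python's standard `urllib.parse`. The function names and the filter names in the example are assumptions for illustration, not a description of how any of these sites work.

```python
from typing import Dict, List
from urllib.parse import parse_qs, urlencode


def filters_to_query(filters: Dict[str, List[str]]) -> str:
    """Encode the selected filters as a URL query string."""
    # Sort groups and values so the same selection always yields the same URL.
    pairs = [
        (group, value)
        for group in sorted(filters)
        for value in sorted(filters[group])
    ]
    return urlencode(pairs)


def query_to_filters(query: str) -> Dict[str, List[str]]:
    """Rebuild the filter selection from a URL query string."""
    return {group: sorted(values) for group, values in parse_qs(query).items()}
```

Because the selection lives in the URL, a filtered results page can also be bookmarked or shared with a colleague.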

Pew Research Center

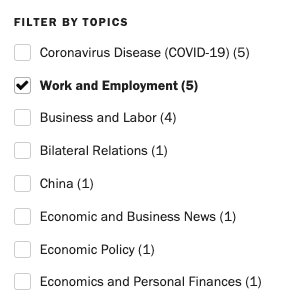

The Pew Research Center also nails search with a good-looking, thoughtfully designed search filtering page:

Like Reason, Pew shows the number of results prominently, offers attributes as filters, uses checkboxes for most filters, and presents results in an easily scannable list.

Pew offers pagination, rather than “Load More,” which we think is a mistake, but it does keep results persistent, so back button functionality works when dipping in and out of search results.

Pew also offers two really great features that Reason does not:

- Visual filter management. By stacking up filters at the top of the search results, Pew uses conventions users understand from e-commerce and makes removing filters intuitive.

- Previewing results numbers. This guides users to filters that will help to narrow their results quickly. Pew also grays out some filter options, indicating when a filter has eliminated some content from the results.
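Previewing result numbers comes down to counting, for each filter option, how many of the current results carry that value. Here is a minimal sketch, assuming results are simple records with a field per filter. The function names and sample field names are hypothetical, and this is not Pew's actual code.

```python
from collections import Counter
from typing import Dict, List


def preview_counts(
    results: List[Dict[str, str]], group: str, options: List[str]
) -> Dict[str, int]:
    """Count how many current results match each option in a filter group."""
    counts = Counter(record[group] for record in results)
    return {option: counts.get(option, 0) for option in options}


def grayed_out(counts: Dict[str, int]) -> List[str]:
    """Options with no matching results, which the page can gray out."""
    return sorted(option for option, count in counts.items() if count == 0)
```

Showing the count next to each option tells users which filter will narrow the list fastest, and graying out empty options saves them from dead ends.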

Bigger Picture: Embracing Inbound

Larger groups may not value search as much because they believe their content gets seen anyway. They reach journalists through their large communication teams, reach Hill staffers through their government outreach teams, and place research directly in the hands of other researchers, often mailing it out as physical publications.

Social media too is a way of extending that old-school, “we’ve got a great list” mentality. Just as groups have thrived based on their direct-mail lists or rolodexes filled with friendly journalists, their social media followers become another means of broadcasting messages out. Same model, different mechanism.

It’s all part of the megaphone, outbound approach. But your website opens a new front, a new way of doing things. It’s not a megaphone, it’s a magnet.

By embracing search that works well (one part of making a website more usable), think tanks can embrace the inbound “magnet” model of marketing in addition to their well-established outbound marketing efforts.

In this mode of thinking, research is still used as fodder for direct outreach to journalists, policymakers, and fellow scholars, but it’s given further purpose by populating search engine results and being permanently available (and hopefully discoverable) on your website.

I suppose this stuff is obvious: of course we understand we’re no longer in the days of relying solely on media outreach and pushing our content to our desired audiences.

But if that’s true, then why are so many think tank websites difficult to navigate, impossible to search, and poorly ranked on search engines?

The answer: it’s easier to keep doing the same thing than to change, especially if you’re still getting good-enough results.

Start Measuring Inbound

In order to take advantage of what the web really has to offer, think tanks need to start measuring their website performance and making it clear to their donors that this stuff matters.

So instead of measuring only outbound success indicators like:

- Op-Eds

- Media Citations

- Television/Radio Appearances

- Social Media Followers

Think tanks also need to measure inbound indicators like:

- Search Engine Ranking

- Organic Search Traffic

- Time on Site

- Pages per Visit

- Dwell Time

- Newsletter Sign-Ups

- Contacts Generated from Web Forms

Think tanks can take this even further by using a service like CallRail to track how many phone calls were the result of visits to their website.

Only by measuring inbound marketing performance will think tanks start to invest in their website as much as they invest in outbound marketing methods.
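As a rough illustration of what measuring inbound can look like, the sketch below computes a few of the indicators listed above from exported visit data. The `Session` record and its fields are assumptions made for this example. Real analytics tools define and export these numbers in their own ways.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Session:
    # Hypothetical fields for one website visit.
    source: str             # e.g. "organic", "social", "direct"
    pages_viewed: int
    seconds_on_site: float
    signed_up: bool         # did the visitor join the newsletter?


def inbound_summary(sessions: List[Session]) -> Dict[str, float]:
    """Summarize a batch of visits into a few inbound indicators."""
    total = len(sessions)
    if total == 0:
        return {
            "sessions": 0,
            "organic_share": 0.0,
            "pages_per_visit": 0.0,
            "avg_seconds_on_site": 0.0,
            "newsletter_signups": 0,
        }
    organic = sum(1 for s in sessions if s.source == "organic")
    return {
        "sessions": total,
        "organic_share": organic / total,
        "pages_per_visit": sum(s.pages_viewed for s in sessions) / total,
        "avg_seconds_on_site": sum(s.seconds_on_site for s in sessions) / total,
        "newsletter_signups": sum(1 for s in sessions if s.signed_up),
    }
```

Tracking a summary like this month over month gives donors a number to watch alongside op-eds and media citations.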

We Can Help

If you want to learn more about optimizing search or any other part of your think tank website, you can call us for a free 20-minute consultation. We can help you identify problem areas and what should be addressed first to create the biggest return on your investment of time and money.

Don’t worry, this phone call won’t be “salesy.” Our call will be focused on learning about your goals and the problems you’re facing. That way, we can determine if our approach would be a good fit for your needs.