There are two ways to use AI in publishing right now.

One is to use AI to generate more pages. Scaled content, refreshed archives, listicles that position your own product at the top, comparison pages churned out at volume. Most of what gets called “GEO” falls here.

The other is to use AI to organize the pages you already have. Consistent tagging across a thirty-thousand-article archive. Clean entity connections to the real-world people, places, and concepts your writing is actually about. Schema markup that ties every piece to Google’s Knowledge Graph.

Only one of these is new.

Lily Ray’s vicious cycle

Lily Ray—VP of SEO & AI Search at Amsive and founder of Algorythmic—closed out SEO Week 2025 with a talk that should be required viewing for anyone selling publisher SEO right now.

Her point, after 15 years of watching the industry: SEO runs in a vicious cycle. Practitioners discover a tactic, scale it, see short-term gains, and then Google crushes it. Keyword stuffing, link schemes, article spinning, parasite SEO, programmatic pages, and now AI content floods.

Each wave creates a gold rush, then the penalty hits.

Ray’s takeaway is that the future belongs to strategies search engines can’t take away: authentic work, original research, and strong brands. Things built on trust. One might even say things built on E-E-A-T.

I call this the SEO’s Hippocratic Oath. First, do no harm.

One way to test whether you’re doing something harmful is to ask whether the change benefits the user. A corollary: would you make this change even if Google didn’t exist?

In a recent Substack post, Ray flagged five GEO tactics she sees burning brands right now:

- Scaling AI-generated content

- Artificially refreshing old articles with cosmetic AI rewrites

- Self-promotional listicles that put your own brand at #1

- “Summarize with AI” buttons with hidden prompt injection instructions

- Scaled alternatives and comparison pages

Four of them are the same old thing dressed up in a new jacket. The fourth one on the list is worse.

Four of these are just spam at a new price point

Look at tactics one, two, three, and five. Strip the AI branding away. What are they?

Spam content at scale.

That’s been the go-to shortcut on the web since there was a web. Article farms, content mills, programmatic SEO pages, and “best of” roundups engineered to rank. Tried-and-true short-term garbage.

The difference in 2026 is the cost curve. AI made all the old tactics cheap enough that any marketing team can run them.

This is exactly why these tactics fit so neatly inside Lily Ray’s vicious cycle. They aren’t some new frontier that AI opened up. They’re the oldest shortcut in SEO, now available at a lower price point.

And just as the tactics are the same, the outcome will be the same. Google has already started cracking down on scaled AI content, and the penalty pattern she describes is playing out in real time: initial growth, then a visibility crash when an algorithm update lands.

If you’re a publisher thinking about which of these to experiment with, the right question isn’t “will it work?” The right question is “how many cycles do I want to buy into before I’m on the wrong side of one?”

The fourth tactic is something else

Tactic four (prompt injection hidden inside “summarize with AI” buttons) is ethically different from the other three.

The others are low-value. They add garbage to the web, which is rude, but the damage is mostly to the publisher’s own brand.

Prompt injection, by contrast, is actively negative. It’s an attempt to hijack someone else’s AI system and insinuate your recommendations into responses the user will see later. Microsoft’s security team classified this as a prompt injection attack and documented over fifty examples across thirty-one companies.

That’s not SEO, that’s a security issue, and in regulated industries like healthcare and finance it’s also a legal one.

Shame on anyone running this.

What if you were a publisher with no Google?

Here’s a thought experiment.

You run a magazine. Your archive has thirty thousand articles. You have unlimited resources. Now imagine Google doesn’t exist. What do you spend money on?

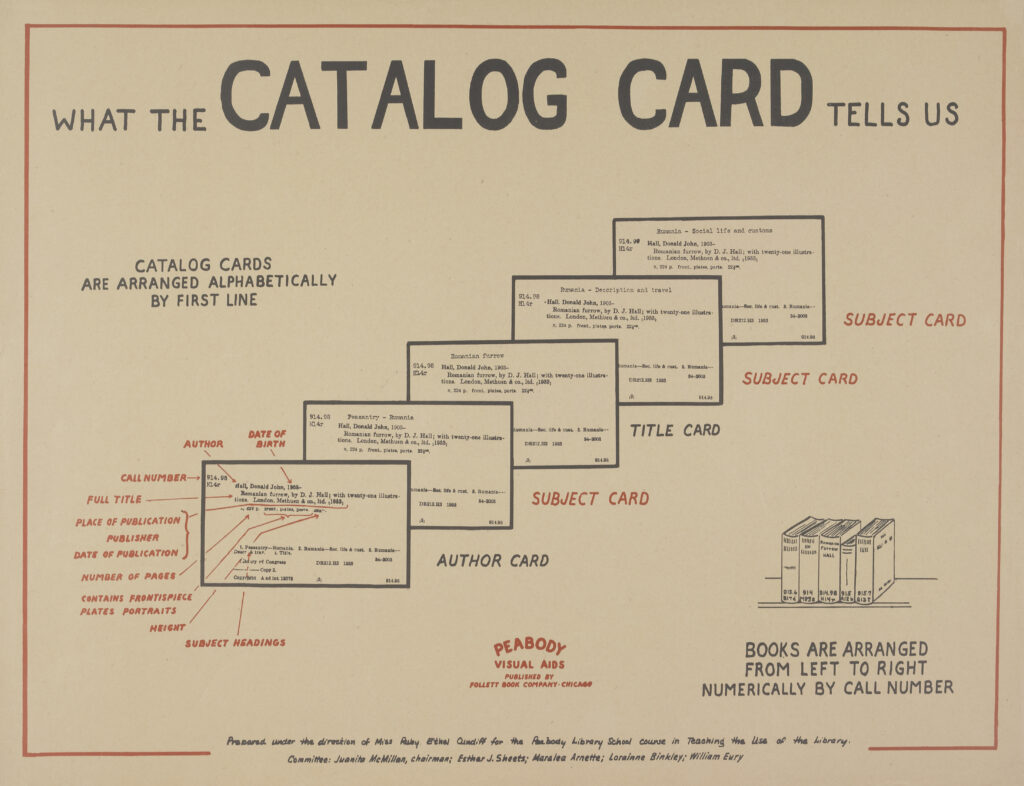

You’d hire a digital librarian. Probably a team of them. You’d want every article cataloged using a consistent controlled vocabulary, so that searches across your own archive actually worked. You’d want every person, organization, policy, and concept labeled the same way every time. No “Barack Obama” or “President Obama” or “Obama” chaos. No two or three tags that mean the same thing. No tag that gets used once and then forgotten.

You’d want this because it makes the archive more useful to your readers and to your editors. It helps surface the right related article at the end of a post. It makes the site searchable. It preserves institutional memory when staff turn over. It’s a good idea on its own merits, whether or not a search engine ever sees it.
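A minimal sketch of why consistent tagging pays off, assuming a hypothetical alias table (`ALIASES`) and a hypothetical three-article archive (`RAW_ARCHIVE`); none of these names come from a real CMS or product. `normalize` collapses variants like “President Obama” onto one canonical ID and sets aside tags it cannot match for a human to review. `related` then ranks other articles by the Jaccard overlap of their canonical tags, which is one simple scoring choice among many.

```python
# Hypothetical controlled vocabulary: every variant maps to one canonical ID.
ALIASES = {
    "barack obama": "barack-obama",
    "president obama": "barack-obama",
    "obama": "barack-obama",
    "pension reform": "pension-reform",
    "pensions": "pension-reform",
    "state budget": "state-budget",
}

# Hypothetical archive: article slug -> free-text tags as writers entered them.
RAW_ARCHIVE = {
    "obama-pension-speech": ["President Obama", "Pensions"],
    "pension-explainer": ["pension reform", "State Budget"],
    "obama-retrospective": ["Barack Obama", "Obamacare"],
}


def normalize(raw_tags: list[str]) -> tuple[set[str], list[str]]:
    """Return (canonical IDs, tags that matched no alias) for one article."""
    canonical: set[str] = set()
    unknown: list[str] = []
    for tag in raw_tags:
        entity_id = ALIASES.get(tag.strip().lower())
        if entity_id is None:
            unknown.append(tag.strip())  # left for a human cataloger to review
        else:
            canonical.add(entity_id)
    return canonical, unknown


# Cleaned archive: slug -> set of canonical IDs.
ARCHIVE = {slug: normalize(tags)[0] for slug, tags in RAW_ARCHIVE.items()}


def jaccard(a: set[str], b: set[str]) -> float:
    """Shared tags divided by all tags across both articles (0.0 if both empty)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def related(slug: str, k: int = 2) -> list[str]:
    """Top-k other articles by tag overlap, ties broken alphabetically."""
    tags = ARCHIVE[slug]
    scored = [(jaccard(tags, other_tags), other)
              for other, other_tags in ARCHIVE.items() if other != slug]
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [other for score, other in scored[:k] if score > 0]


print(normalize(RAW_ARCHIVE["obama-retrospective"]))  # ({'barack-obama'}, ['Obamacare'])
print(related("obama-pension-speech"))  # ['obama-retrospective', 'pension-explainer']
```

Without the alias step, “President Obama” and “Barack Obama” would never match, and the retrospective would not surface as a related article.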

The problem is that this has never been economically realistic for any publisher I’ve worked with. I’ve worked with publishers sitting on half a million articles. The tagging is a mess, and it’s no fault of theirs. No team of humans could keep that much writing consistently labeled. Applying a clean controlled vocabulary across a working publication was never a job that was going to get done by hand.

That’s the work AI is actually good at. Not writing, not “refreshing,” but cataloging.

Google’s Knowledge Graph is LCSH for the web

Libraries solved the controlled-vocabulary problem decades ago. Library of Congress Subject Headings is a curated vocabulary used across research libraries to make the literature retrievable. It’s imperfect, it’s slow to update, and the cataloging profession will argue about it forever. But it works. You can walk into any major library and find the book you need because someone applied LCSH to it.

Google’s Knowledge Graph is the same idea, built for the web, and in one important way, better. LCSH is a flat list of terms. The Knowledge Graph is a graph — nodes and edges, entities and the relationships between them. When you tag “Marco Rubio” on an article, the Knowledge Graph already knows he’s the Secretary of State, a Republican, and a former senator from Florida. You don’t have to build any of those connections yourself; they’re already there.
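To make the flat-list versus graph distinction concrete, here is a toy sketch. `GRAPH` is a hypothetical stand-in built only from the facts in the paragraph above; it is not the Knowledge Graph’s data model or API. Tagging a single node lets a breadth-first walk reach connected entities the article never named.

```python
from collections import deque

# Hypothetical toy graph: entity -> list of (relation, target entity).
GRAPH = {
    "Marco Rubio": [
        ("position held", "U.S. Secretary of State"),
        ("member of party", "Republican Party"),
        ("former position", "U.S. Senator from Florida"),
    ],
    "U.S. Senator from Florida": [("state", "Florida")],
}


def related_entities(start: str, max_hops: int = 2) -> dict[str, int]:
    """Breadth-first walk returning each reachable entity and its hop distance."""
    distances = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if distances[node] == max_hops:
            continue  # don't expand past the hop limit
        for _relation, target in GRAPH.get(node, []):
            if target not in distances:
                distances[target] = distances[node] + 1
                queue.append(target)
    del distances[start]  # report only the neighbors, not the tagged entity itself
    return distances


print(related_entities("Marco Rubio"))
# {'U.S. Secretary of State': 1, 'Republican Party': 1, 'U.S. Senator from Florida': 1, 'Florida': 2}
```

A flat subject list would stop at the string “Marco Rubio”; the edges are what let a system connect the article to Florida without anyone tagging it.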

The Knowledge Graph isn’t perfect. It’s opaque in places, incomplete in others, and Google doesn’t publish everything it knows. But it exists today, it’s universally accessible, and it’s the method Google itself uses to organize the web around topics.

If you care about AI visibility, organic rankings, Google News, or Google Discover—all of those systems lean on entity understanding. They lean on the Knowledge Graph.

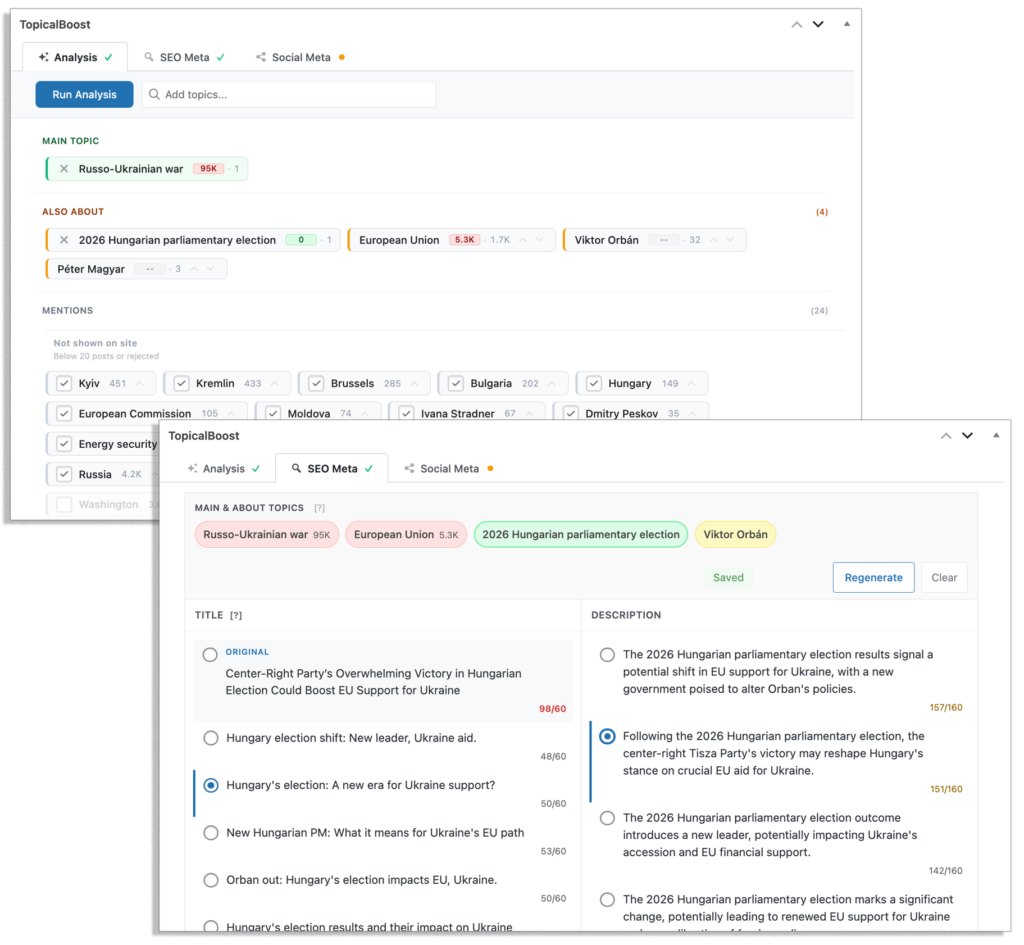

What this actually looks like

In practice, the work happens inside the CMS, while an editor is writing. TopicalBoost scans the draft, identifies entities, and shows the editor which ones are Main Topic, About, and Also Mentioned. An LLM suggests titles and meta descriptions shaped around the focus topic. On publish, internal links get created to topic archive pages, redistributing authority from older posts to newer ones. Schema.org markup connects the article to the right Knowledge Graph nodes.
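Here is a hedged sketch of the last step: emitting schema.org JSON-LD for one article. The mapping is an assumption for illustration, not TopicalBoost’s actual output. Main Topic and About entities go under schema.org’s `about` property (main topic first), Also Mentioned entities go under `mentions`, and each entity points at an external identifier through `sameAs`. The function names, headline, and article URL are hypothetical.

```python
import json

Entity = tuple[str, str]  # (display name, sameAs URL identifying the entity)


def to_thing(entity: Entity) -> dict:
    name, same_as = entity
    return {"@type": "Thing", "name": name, "sameAs": same_as}


def article_jsonld(headline: str, url: str, main_topic: Entity,
                   about: list[Entity], also_mentioned: list[Entity]) -> str:
    """Build a JSON-LD <script> tag for a news article (hypothetical helper)."""
    data = {
        "@context": "https://schema.org",
        "@type": "NewsArticle",
        "headline": headline,
        "url": url,
        # Assumed mapping: Main Topic first, then About entities, under `about`.
        "about": [to_thing(e) for e in [main_topic, *about]],
        # Assumed mapping: Also Mentioned entities under `mentions`.
        "mentions": [to_thing(e) for e in also_mentioned],
    }
    # Escape "</" so a headline can't close the <script> element early;
    # "\/" is a valid JSON escape for "/", so parsers read the same text.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">{body}</script>'


print(article_jsonld(
    headline="How Obama-era policy shaped Illinois",
    url="https://example.com/obama-illinois",
    main_topic=("Barack Obama", "https://en.wikipedia.org/wiki/Barack_Obama"),
    about=[],
    also_mentioned=[("Illinois", "https://en.wikipedia.org/wiki/Illinois")],
))
```

Whether Google weighs `about` and `mentions` differently is not something this sketch claims; it only shows how cataloging decisions made in the CMS can travel with the page as structured data.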

The editor writes the article. The AI does the cataloging.

The payoff is what every publisher is chasing through GEO hacks, without the hacks: more organic visibility, more citations in AI Overviews, more Google Discover pickups, and more Top Stories placements. See our case studies on Illinois Policy and Washington Policy Center, and the mini case studies on Reason Magazine, FDD, and Pacific Legal Foundation.

But none of it comes at the cost of your archive. All the content is still human. Written by people who know the difference between how the world actually works and what merely sounds plausible. The AI isn’t generating copy. It’s organizing what humans already wrote.

Why this survives the cycle

Lily Ray’s point is that the durable strategies are the ones search engines can’t take away.

Applying a consistent controlled vocabulary to your own archive is about as take-away-proof as SEO gets.

Google isn’t going to penalize better tagging. Nobody gets demoted for connecting their articles to real-world entities with precision. The Knowledge Graph isn’t a loophole; it’s a system Google built specifically to reward publishers who do this well.

There are two ways to use AI in publishing. One is a new price point for a very old shortcut. It will follow the same trajectory as every shortcut before it. The other is a job that was never possible at publisher scale until now, and it happens to be exactly what Google has been quietly rewarding for years.

Choose accordingly.